For many types of music, the transposition level of a given piece or work (i.e. the tonal key or pitch-class center) is assumed to be fixed. Performers have excellent reasons to consistently perform songs/pieces at the same transposition level, including the tactical means necessary to perform the piece, limitations of a vocal or instrumental pitch range, and affective expressiveness, to name a few. Conversely, there are also reasons why works are sometimes transposed higher or lower in pitch, especially when arranging tunes for different instruments or ensembles. However, the normative assumption for listeners is that a given musical work will be performed in the same key across multiple performances.

Perceptual research has shown that listeners are quite capable of recognizing transposed melodies. Listeners use a variety of cues to recognize a musical tune, including melodic and harmonic interval information (Bharucha & Krumhansl, 1983) and/or timing and rhythmic information (Dowling & Fujitani, 1971; Warren, Gardner, Brubaker & Bashford, 1991)—features which do not change when a tune is transposed up or down in pitch. Research by Edworthy (1985) found that, even when a musical work is transposed, melodic "contour information is immediately precise" (p. 375), suggesting that even when pitch transpositions are introduced, melodic contour facilitates tune recognition.

The ability of listeners to identify transposed recordings of music has been investigated via a variety of methods. One popular methodology for addressing this question has been testing the ability of listeners to recall the starting pitch of a known melody without any pitch reference. Halpern (1989) found that non-AP (Absolute Pitch) possessors were consistent (less than 2 semitones) when producing the starting pitch for known tunes from memory, both within and between testing sessions. Similar research by Levitin (1994) also found evidence that non-trained listeners were fairly accurate in recalling the original transpositions of known tunes; participants in that study reproduced melodies within 2 semitones on approximately 44% of all trials. Both of these studies suggest that non-AP listeners may encode the approximate transposition level of a song absolutely, despite not having the ability to explicitly state the specific transposition/key. However, a recent replication study by Jakubowski and Müllensiefen (2013) found that absolute pitch memory might not be as accurate as predicted by either Halpern (1989) or Levitin (1994). With regard to recognizing alterations of pitch, a study by Schellenberg and Trehub (2003) found that listeners perform at better than chance levels when identifying semitone and whole-tone transpositions of known tunes, but perform at less than chance levels for unknown works. This research provides strong evidence that even nonmusicians are likely to possess "good" absolute pitch memory (Schellenberg & Trehub, 2003).

Historically, listeners (as opposed to performers) have not had any control over the pitch transposition of a piece. In this manner, listeners have often been modeled as the "passive" end of the musical communication chain (Kendall & Carterette, 1990). However, the rise of digital audio has made it possible for listeners (as well as composers and performers) to easily alter audio recordings in various ways, including transposing a song to a different key, changing the tempo, remixing or mashing different songs, etc., thus affording listeners various opportunities to "customize" their listening experience. Software for capturing and altering digital audio is freely available and easy to use. Changing the transposition or tempo of a digital audio file requires only a few simple mouse clicks. Surprisingly, very few studies have addressed the prevalence of digital audio alterations introduced by listeners; the research presented here investigated this specific question.

Listener alterations of music may go unnoticed by researchers due to the local scope of their influence. A listener who alters the tempo or transposition level of a musical work is likely to do so for personal motivations, leaving little reason to express or share the modified work with a larger audience. Fortunately for researchers, advances in digital content sharing, especially public content sharing, have created an opportunity to study the ways in which listeners alter musical works. Of the many possible content-sharing venues, this paper will focus solely on alterations made within user-posted YouTube videos. The use of YouTube data as a scientific tool has been referred to as YouTubeology (Jenkins, 2006). The short glimpses that YouTube users share via the web contain a wealth of knowledge that may be useful for addressing a variety of research questions, especially questions pertaining to music consumption. A popular quote by Lev Grossman (2006) describes the power of this field:

"You can learn more about how Americans live just by looking at the backgrounds of YouTube videos—those rumpled bedrooms and toy-strewn basement rec rooms—than you could from 1,000 hours of network television."

Music is often ranked as one of the largest content categories on YouTube, with estimates that music accounts for between 19 and 31% of all content (sysomos.com, 2009 report). Music posted on YouTube has been useful for various research questions, including descriptive analytics on music therapy sessions (Gooding & Gregory, 2011), case studies on musical artist growth and development (Cayari, 2011), musical half-life (Prellwitz & Nelson, 2011), and user-comment sentiment strength (Thelwall, Sud, & Vis, 2012), to name a few. The use of YouTube data to address musical questions is in a relatively nascent stage, and to date, no known studies have addressed the ways in which YouTube users manipulate existing musical material.

The case study provided here stems from an investigation that began in 2011. At that time, anecdotal evidence collected in a private guitar studio suggested that user-posted YouTube music videos frequently contained changes of pitch, tempo, or both. YouTube videos were often in a different key when compared against student tablatures and sheet music, making these alterations particularly noticeable. In a few exceptional cases, user-posted videos explicitly stated their motivations for modifying the musical works (e.g. to avoid copyright infringement detection). As an initial step towards understanding the prevalence of YouTube user-posted modifications, a small corpus study was devised. A set of user-posted YouTube videos was gathered and then compared against "official" artist recordings.

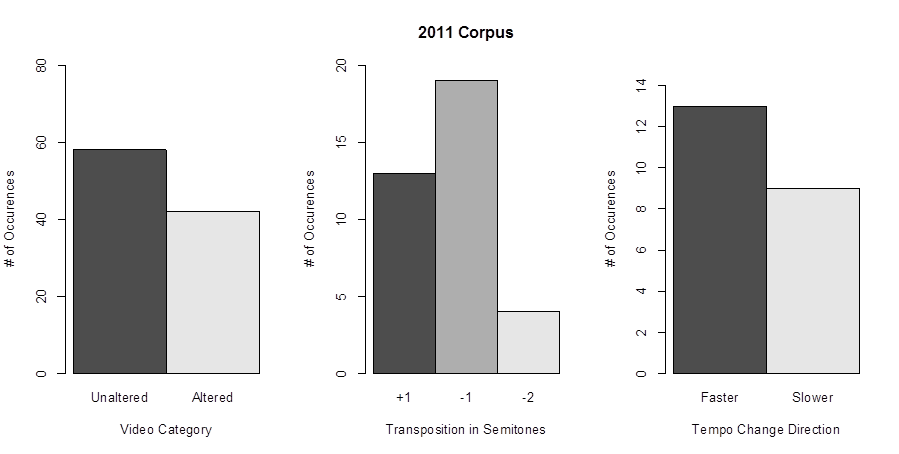

Study #1: 2011 Corpus

Acquisition

A total of 100 user-posted videos, 10 versions of 10 different songs, were chosen to serve as a small corpus for investigating the prevalence of pitch and tempo alterations on YouTube. The 10 chosen songs were taken from the Rhapsody Music Charts top 10 tracks for the week of September 22, 2011 (see appendix for track list). For each song, 10 unique user-posted versions of the song were recorded from unofficial YouTube sources.

Official recordings of each track were obtained via a commercial music subscription service (Rhapsody) in order to establish baseline measurements. Thereafter, user-posted lyric videos were collected by searching for the following pattern within YouTube: <song title> <artist name> "lyrics." The first 10 user-posted hits (as opposed to official videos posted by record labels) were recorded for further analysis. User-posted videos were excluded if: they did not contain the actual artist's recording (cover videos), the vocal track had been removed (karaoke videos), the track was labeled as a live or acoustic version, or if the title of the video explicitly stated the video was a "chipmunk," "Minion," or "reversed" version of the track. Videos with subtitles in other languages were included in the corpus, as long as the original audio track was used. Recordings were made by piping web audio through the Soundflowerbed (Cycling '74 & Rogue Amoeba) audio routing tool directly into the Audacity (The Audacity Team) sound recorder. Only the first 30 seconds of audio from each user-posted video was recorded. All files were saved in mp3 format.
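The search pattern and title-based exclusion criteria described above can be sketched in code. The following is only a minimal illustration, not the procedure actually used in the study, where videos were screened by hand. The function names and the keyword list are hypothetical, and matching on title keywords is an assumption: it cannot detect unlabeled covers or karaoke tracks, it does not distinguish user-posted from official uploads, and it would wrongly exclude songs whose own titles contain a word such as "live."

```python
import re

# Hypothetical keyword list approximating the exclusion criteria in the text.
# Assumption: exclusions can be spotted from the video title alone.
EXCLUDED_TITLE_TERMS = (
    "cover", "karaoke", "live", "acoustic", "chipmunk", "minion", "reversed",
)


def build_query(song_title, artist_name):
    """Build the search string: <song title> <artist name> "lyrics"."""
    return f'{song_title} {artist_name} "lyrics"'


def is_excluded(video_title):
    """Return True if the title contains any exclusion keyword as a whole word."""
    title = video_title.lower()
    return any(
        re.search(rf"\b{re.escape(term)}s?\b", title)
        for term in EXCLUDED_TITLE_TERMS
    )


def first_n_eligible(video_titles, n=10):
    """Keep the first n search hits (in rank order) that are not excluded."""
    eligible = [title for title in video_titles if not is_excluded(title)]
    return eligible[:n]
```

A call such as `first_n_eligible(titles)` would return at most ten titles in their original search order; any automated screening of this kind would still need to be checked by ear.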

Corpus Analysis

After the 10 original songs (obtained from Rhapsody), and the 100 user-posted recordings (obtained from YouTube) were collected, they were then analyzed using Sonic Visualiser (Cannam, Landone, & Sandler, 2010), an open source application for analyzing the contents of audio files. Sonic Visualiser uses Vamp plug-ins to analyze and annotate audio, and for this particular analysis, two Vamp plug-ins were employed: "Chordino: Chord Estimate" (Mauch & Dixon, 2010), for determining the root pitch of the first chord in each audio file, and "Simple Fixed Tempo Estimator" (Cannam), for determining the tempo of each musical excerpt based on information within the first 10 seconds of the audio file. Key and tempo measurements for each YouTube user-posted video were compared to the measurements of the original audio track (obtained from Rhapsody). A priori, it was decided to operationally define user-manipulations of pitch whenever songs were transposed by at least +/– 1 semitone. User manipulations of tempo were defined to occur whenever the tempo measurements of the original and user-posted video differed by at least 3 beats per minute (bpm). In order to verify the accuracy of the measurements, all videos that were flagged as having a user manipulation of transposition or tempo were verified by the experimenter. Pitch transpositions were verified by checking the key area of each tune against tones played on a synthesizer (SimpleSynth). Tempo alterations were verified by aligning the starting point of both the original and user-posted versions of each track within Audacity, and then ensuring that the tempo had been increased or decreased on the basis of the recordings moving out of phase.
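The operational definitions above (a pitch alteration of at least 1 semitone, a tempo alteration of at least 3 bpm) can be expressed as a short classification routine. This is a hedged sketch rather than the author's analysis script: the function names and the dictionary layout are hypothetical, and it assumes that the chord root reported for each file is available as a note name. Because a chord root only identifies a pitch class, the direction of a shift is ambiguous; the sketch assumes the smaller of the two possible shifts, which is exactly the kind of assumption that manual verification would need to confirm.

```python
# Thresholds taken from the operational definitions in the text.
PITCH_THRESHOLD_SEMITONES = 1
TEMPO_THRESHOLD_BPM = 3.0

# Note names mapped to pitch classes (0-11).
PITCH_CLASSES = {
    "C": 0, "C#": 1, "Db": 1, "D": 2, "D#": 3, "Eb": 3, "E": 4, "F": 5,
    "F#": 6, "Gb": 6, "G": 7, "G#": 8, "Ab": 8, "A": 9, "A#": 10, "Bb": 10,
    "B": 11,
}


def semitone_shift(original_root, posted_root):
    """Signed shift in semitones, folded to the range -5..+6.

    Assumption: the smaller of the two possible shifts is the true one.
    """
    diff = (PITCH_CLASSES[posted_root] - PITCH_CLASSES[original_root]) % 12
    return diff - 12 if diff > 6 else diff


def classify(original_root, original_bpm, posted_root, posted_bpm):
    """Flag a user-posted recording as pitch- and/or tempo-altered."""
    shift = semitone_shift(original_root, posted_root)
    tempo_diff = posted_bpm - original_bpm
    return {
        "semitone_shift": shift,
        "tempo_diff_bpm": tempo_diff,
        "pitch_altered": abs(shift) >= PITCH_THRESHOLD_SEMITONES,
        "tempo_altered": abs(tempo_diff) >= TEMPO_THRESHOLD_BPM,
    }
```

For example, `classify("C", 100.0, "B", 104.0)` would flag both a downward shift of one semitone and a tempo increase of 4 bpm.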

Results

Of the 100 user-posted videos that were collected, analyzed, and verified, 42 were found to contain a nominal change in either pitch (+/– 1 semitone) or tempo (+/– 3 bpm). Of the 36 recordings that contained a change in the pitch, 13 recordings were transposed up one semitone, 19 recordings were transposed down 1 semitone, and 4 recordings were transposed down 2 semitones. Of the 22 recordings that contained a nominal change in tempo, 13 involved a tempo increase, and 9 contained a tempo decrease. A number of videos contained both a change in pitch and a change in tempo. In particular, it was of interest to determine if these changes were the result of a simple change of speed, in which tempo and pitch would both increase or decrease together, or the result of a more sophisticated manipulation in which tempo and pitch were altered independently. Within the corpus, 13 videos contained a nominal change of pitch and tempo, and within these results, 10 changes were consistent with the "tape speed change" method. Results from this initial study are plotted as bar graphs in Figure 1.
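The distinction between a simple change of speed and independent manipulation of pitch and tempo can be made concrete. When a recording is simply played faster or slower by a ratio r, every frequency is scaled by r, so the pitch moves by 12·log2(r) semitones; a one-semitone shift therefore corresponds to a speed change of roughly 6%. The sketch below applies this relationship. The function names and the half-semitone tolerance are assumptions introduced for illustration, not values taken from the study, which judged consistency by whether pitch and tempo moved in the same direction.

```python
import math


def expected_semitone_shift(original_bpm, posted_bpm):
    """Pitch shift (in semitones) implied by a pure change of playback speed."""
    return 12.0 * math.log2(posted_bpm / original_bpm)


def consistent_with_speed_change(semitone_shift, original_bpm, posted_bpm,
                                 tolerance=0.5):
    """True if the observed pitch shift matches the shift implied by the tempo ratio.

    Assumption: a tolerance of half a semitone, chosen here only for illustration.
    """
    expected = expected_semitone_shift(original_bpm, posted_bpm)
    return abs(expected - semitone_shift) <= tolerance
```

Under these assumptions, a video that is one semitone higher and 6% faster than the original would count as a "tape speed change," whereas a video that is one semitone lower and 6% faster would not.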

Discussion

In gathering and analyzing this initial corpus of user-posted videos, there was some hope that larger follow-up studies could be quickly analyzed using various Vamp plug-ins. However, through the process of analyzing and verifying the results of the two plug-ins used in this study, several concerns were noted. Data from the Chordino plug-in were quite good. There was only one instance (less than 3% of the measurements) in which Chordino's analysis was found to be erroneous, and to be fair, this case involved a recording with a pitch transposition between 1 and 2 semitones. Chordino labeled the transposition as two semitones lower, whereas the experimenter found the transposition to be closer to a single semitone lower (and so it was recorded as such). This exception raised two noteworthy points. First, there is no reason that user-posted alterations of pitch need to be made in units of discrete semitones, and therefore, it is plausible that the corpus contained a greater number of pitch transpositions (e.g. transpositions smaller than a semitone) than reported above. Secondly, the Chordino plug-in is an acceptable tool for detecting changes of pitch transposition, but analytical performance might be considered too coarse to truly answer the research question of interest. Manually checking the output of the Chordino plug-in proved to be time-consuming, but at the same time, necessary for the present investigation.

The second plug-in used in this study, the "Simple Fixed Tempo Estimator," performed substantially worse than Chordino. Of the 22 detected tempo changes, 6 of these measurements (27%) indicated the wrong manipulation directionality (e.g. indicating a faster tempo that was later verified to be slower). Further, the plug-in also flagged an additional 6 samples as having a tempo change that could not be manually verified using the alignment procedure described above. Analyzing tempo information is a complex task, with textural differences yielding unique results for what actually constitutes the musical "beat." However, given the similarity between the stimuli being measured and compared, the results were disappointing. Other researchers using this particular tool for audio analysis are encouraged to verify at least a small subset of their results for accurate measurements.

Within the collected corpus of user-posted YouTube videos, it was a surprise to discover that altered videos were almost as common as unaltered recordings. While many motivations are possible and could be discussed here, a single explicit motivation can be shared. Throughout the collection of this corpus, a few YouTube users explicitly stated that they had shifted the pitch or tempo of the original audio track "in order to avoid copyright" issues. The truthfulness of the users' comments, which appeared in various locations (within the video, within the video description, within user-posted comments, etc.), might be assumed, but the legal protection afforded by making such changes is debatable. Perhaps a more appropriate description of the listeners' motivation would be the desire to prevent the copyrighted material in their videos from being "automatically" detected by YouTube. Via a YouTube system known as Content ID, all new YouTube content is scanned against a large database of files to determine whether it contains copyrighted material. If a violation is detected, the owner of the copyrighted content may proceed in different ways, including muting the audio portion of the track, blocking the video entirely, or collecting money from the video through online advertisements. According to YouTube, the Content ID system scans over one hundred "years" of audio/video every day (support.google.com/youtube). Whether or not simple pitch and tempo transpositions are actually effective in avoiding detection by YouTube's Content ID is a separate question beyond the scope of this research. The prevalence of user-posted altered videos, along with tutorial videos (on YouTube) that explicitly instruct others how to avoid detection by the Content ID system, suggested that many of the alterations in the initial corpus reported here were motivated as such.

Another potential explanation for the large number of altered user-posted videos on YouTube could stem from "copycat" syndrome, in which YouTube users make copies of other user-posted videos that had not been flagged by the Content ID system. In these cases, it is possible that some YouTube users may not have been aware that their shared content was in some way altered.

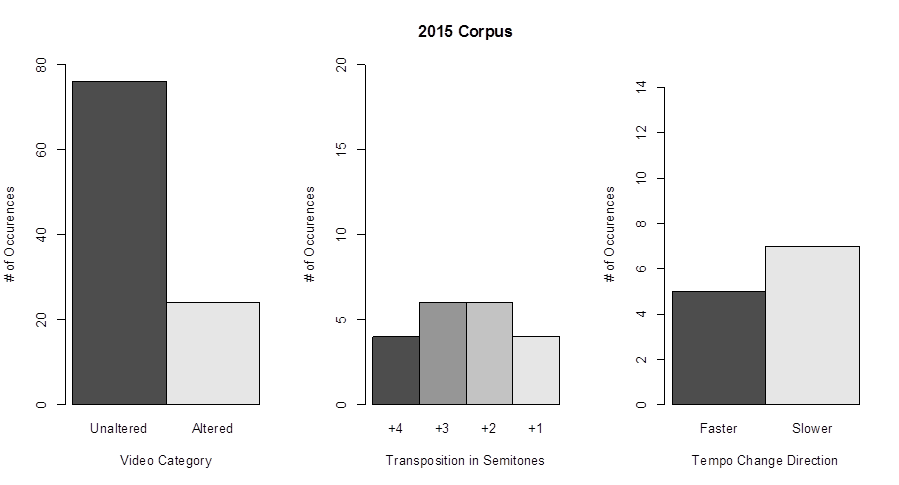

Study #2: 2015 Corpus

Since the initial corpus was collected in 2011, user-posted YouTube videos have proved useful to the author for answering quick empirical questions about pitch transpositions. In 2012, an unpublished corpus study of videos sung by men and women found that female voices were more likely to be pitch shifted upwards. In 2013, another YouTube corpus sought to determine if songs with aggressive lyrics were more likely to be pitch shifted up or down (the results were inconclusive). Further, a recent report looked at the various techniques used by YouTube users for mocking artists via alterations of original YouTube videos (Plazak, 2015). Over the course of these four years, anecdotally, it seemed to become more difficult to find altered versions of user-posted videos. In order to determine if videos in 2015 were more or less likely to be altered, a replication of the initial 2011 corpus study was undertaken.

Methods

The methodology for the replication study was the same as the initial study. The commercial music service Rhapsody no longer offered a Top 10 songs playlist, and so the 10 songs used in the replication study were taken from the iTunes Top 10 list for the week of September 21, 2015 (see appendix for track list). Again, user-posted videos were searched using the term <artist name> <song title> "lyrics;" the same exclusion criteria were applied within the replication corpus. An identical process and the same software were used to both record and analyze the data. Two songs on the iTunes top 10 list had fewer than 10 user-posted videos to collect. Amongst a number of potential reasons, this could have been due to the songs being relatively new. In order to collect a full set of 100 user-posted videos, songs with fewer than 10 user-posted videos were omitted from the study, and the next songs on the iTunes top tracks list were included instead (the full list of included and excluded songs is given in the appendix).

Results

Of the 100 user-posted videos that were collected in the replication study, 24 were found to contain a nominal change in either pitch (+/– 1 semitone) or tempo (+/– 3 bpm). All of the 20 recordings that contained a change in the pitch involved an upwards transposition: 4 recordings were transposed up one semitone, 6 recordings were transposed up by 2 semitones, 6 recordings were transposed up by 3 semitones, and 4 recordings were transposed up by 4 semitones. Of the 12 recordings that contained a nominal change in tempo, 5 involved a tempo increase, and 7 contained a tempo decrease. Eight altered tracks contained both a nominal change of pitch and tempo, and within these results, 3 of these changes were consistent with the "tape speed" method. Results from this replication study are plotted as bar graphs in Figure 2.

Discussion

As predicted, the 2015 corpus of user-posted YouTube recordings contained fewer instances of pitch and tempo manipulations; however, these alterations were still evident in the dataset. One potential explanation for this observation could be that more and more copyright holders are choosing to monetize copyright-infringing videos rather than blocking them entirely. If this were true, one would expect to find more advertisements embedded into unaltered videos, and fewer advertisements embedded into videos containing pitch and tempo alterations. Unfortunately, advertisements at the beginning of user-posted videos were not recorded, thus leaving this hypothesis untested. Also, none of the pitch alterations in the 2015 corpus were downward transpositions. This is in strong contrast to the 2011 corpus, in which the majority of pitch alterations involved downward transpositions.

The differences between the 2011 and 2015 corpora also highlight a unique feature about contemporary corpus research: one should never assume that the phenomena of interest will persist indefinitely. While it is not possible to generalize the results from two small corpora alone, if user-posted manipulations of YouTube videos are in fact becoming less common, then the window of opportunity for gathering this type of data may soon be closed. This is in stark contrast to other types of musical corpora in which both recorded and notated musical works are actively archived and preserved. Observations of listener-manipulations of music can only be captured in the potentially short amount of time in which users feel obliged to share such content. Future research might investigate the average "age" of a YouTube video before deletion in order to help quantify the actual window of opportunity for preserving user-posted videos.

The results presented here problematize a basic assumption about music perception, namely that musical works are repeatedly heard at the same pitch transposition level. Jakubowski and Müllensiefen (2013) posited that observations of poor absolute pitch memory (relative to earlier studies) may have been due to the popularity of listening to music via YouTube, and the prevalence of user-posted YouTube video alterations. Further research is needed to investigate the implications of "learning" a musical work in multiple, closely located, pitch transpositions. Recall Schellenberg and Trehub's (2003) finding that absolute pitch memory is poor for unknown works. In cases of "learning" multiple transposed versions of a song via altered user-posted videos, the "true" pitch transposition of a tune could in fact be unknown, and thus could lead to relatively poor absolute pitch memory. Further, during the time of Jakubowski and Müllensiefen's data collection, it is likely that user-posted YouTube alterations were quite common.

In general, this corpus may highlight an important evolutionary branching of the "listener." In many musical communication models, such as Kendall and Carterette's (1990) Composer-Performer-Listener model, the listener is often considered the simple endpoint of the musical communication chain. However, user-posted YouTube videos reveal that end users in fact perform alterations of standard musical features, such as key and tempo, that were once only manipulated by composers and performers. Extending Kendall and Carterette's ideas on the importance of musical context, it is important to note that such user-posted alterations are happening within a specific listener context and likely for specific goals. Perhaps most fascinating is a potential code that seems to have been developed since the initial 2011 YouTube corpus was collected. Specifically, a number of user-posted videos seem to be using obvious alterations of copyrighted content. For example, YouTube users were sometimes very explicit about their alterations. A few videos from the 2015 corpus stated that the recording speed had been increased by 25%. By providing such information, it then becomes possible for other users to reverse these alterations in order to obtain the original source material, similar to a form of encryption that YouTube's Content ID system does not understand. In more extreme cases, some users share "backwards" versions of songs, which can quickly be recognized and decoded by listeners with very basic digital audio tools. These examples mark fascinating case studies of what listeners are currently doing to share content.
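The "decoding" described above amounts to simple arithmetic. As an illustrative sketch only, suppose that a stated 25% speed increase was produced by plain resampling, so that pitch and tempo rise together; this is an assumption, since the videos in the corpus did not document the method used. The original can then be recovered by playing the file at the reciprocal rate, and a "backwards" track can be restored by reversing its samples a second time. The function names are hypothetical.

```python
import math


def restore_rate(stated_speed_increase_percent):
    """Playback rate that undoes a stated speed increase (e.g. 25 -> 0.8)."""
    applied_rate = 1.0 + stated_speed_increase_percent / 100.0
    return 1.0 / applied_rate


def semitones_for_rate(rate):
    """Pitch change, in semitones, caused by playing audio at the given rate."""
    return 12.0 * math.log2(rate)


def unreverse(samples):
    """Undo a 'backwards' upload by reversing the sample order again."""
    return samples[::-1]
```

Under this assumption, a 25% speed increase raises the pitch by `semitones_for_rate(1.25)`, a little under four semitones, and playback at `restore_rate(25)` (a rate of 0.8) returns the recording to its original key and tempo.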

Digital audio offers a number of unprecedented possibilities for researchers interested in corpus research. Tools that are used to analyze digital audio will certainly open up possibilities for asking larger questions, but before they do, more research is needed to verify the accuracy of the data that they produce. The "Simple Fixed Tempo Estimator" plug-in produced a number of erroneous results in both of the studies presented here. Of the 12 user-posted videos flagged as having a tempo change in the 2015 corpus, 3 (25%) measurements indicated the wrong tempo alteration directionality, and 14 videos were analyzed as having a nominal tempo change when none was present. Some variation amongst audio analysis tools should be expected, and therefore, it might be beneficial for future research to examine differences between various digital audio toolkits (e.g. Douglas, 2014).

In summary, this study presented data from two corpora that each contained 100 user-posted YouTube videos that were culled with the intent of observing the prevalence of pitch and tempo alterations in shared online content. Such alterations were evident in both the 2011 and 2015 corpora, the former more so than the latter. The results reported here, while basic, have implications for theoretical models of musical communication in which the listener is modeled as a passive receiver. Further, results from this study stress the importance of verifying analysis data from digital audio toolkits. Automated tools are invaluable for addressing large research questions, but automation also involves an inherent potential for error. Finally, this corpus study was unique in that it captured a novel musical behavior with a limited window of observability. Those interested in pursuing similar research questions via YouTubeology are encouraged to capture their data of interest while it still exists.

NOTES

- Correspondence can be addressed to: Joseph Plazak, Illinois Wesleyan University School of Music, P.O. Box 2900, Bloomington, IL 61702-2900, jplazak@iwu.edu.

REFERENCES

- Bharucha, J., & Krumhansl, C. L. (1983). The representation of harmonic structure in music: Hierarchies of stability as a function of context. Cognition, 13(1), 63-102. http://dx.doi.org/10.1016/0010-0277(83)90003-3

- Cannam, C., Landone, C., & Sandler, M. (2010). Sonic Visualiser: an open source application for viewing, analysing, and annotating music audio files. In A. Del Bimbo, S.F. Chang, & A. Smeulders (Eds.), Proceedings of the 18th ACM Multimedia 2010 International Conference (pp. 1467-1468). New York: Association for Computing Machinery. http://dx.doi.org/10.1145/1873951.1874248

- Cayari, C. (2011). The YouTube effect: How YouTube has provided new ways to consume, create, and share music. International Journal of Education & the Arts, 12(6), 1-30.

- Douglas, C. (2014). Perceived affect of musical instrument timbres. Unpublished Thesis, McGill University.

- Dowling, W. J., & Fujitani, D. S. (1971). Contour, interval, and pitch recognition in memory for melodies. The Journal of the Acoustical Society of America, 49(2B), 524-531. http://dx.doi.org/10.1121/1.1912382

- Edworthy, J. (1985). Interval and contour in melody processing. Music Perception, 2(3), 375-388. http://dx.doi.org/10.2307/40285305

- Gooding, L. F., & Gregory, D. (2011). Descriptive analysis of YouTube music therapy videos. Journal of Music Therapy, 48(3), 357-369. http://dx.doi.org/10.1093/jmt/48.3.357

- Grossman, L. (2006). You—yes, you—are Time's Person of the Year. Time. URL: http://content.time.com/time/magazine/article/0,9171,1570810,00.html

- Halpern, A.R. (1989). Memory for the absolute pitch of familiar songs. Memory and Cognition, 17(5), 572-581. http://dx.doi.org/10.3758/BF03197080

- Jakubowski, K., & Müllensiefen, D. (2013). The influence of music-elicited emotions and relative pitch on absolute pitch memory for familiar melodies. The Quarterly Journal of Experimental Psychology, 66(7), 1259-1267. http://dx.doi.org/10.1080/17470218.2013.803136

- Jenkins, H. (2006). Convergence culture: Where old and new media collide. New York: NYU Press.

- Kendall, R. A., & Carterette, E. C. (1990). The communication of musical expression. Music Perception, 8(2), 129-163. http://dx.doi.org/10.2307/40285493

- Levitin, D.J. (1994). Absolute memory for musical pitch: Evidence from the production of learned melodies. Perception and Psychophysics, 56(4), 414-423. http://dx.doi.org/10.3758/BF03206733

- Mauch, M., & Dixon, S. (2010). Simultaneous estimation of chords and musical context from audio. IEEE Transactions on Audio, Speech, and Language Processing, 18(6), 1280-1289. http://dx.doi.org/10.1109/TASL.2009.2032947

- Plazak, J. (2015). Listener-senders, musical irony, and the most "disliked" YouTube videos. In K.L. Turner (Ed.), This is the Sound of Irony: Music, Politics and Popular Culture. Burlington, VT: Ashgate Publishing.

- Prellwitz, M., & Nelson, M. L. (2011). Music video redundancy and half-life in YouTube. In Research and Advanced Technology for Digital Libraries (pp. 143-150). Springer Berlin Heidelberg. http://dx.doi.org/10.1007/978-3-642-24469-8_16

- Schellenberg, E. G., & Trehub, S. E. (2003). Good pitch memory is widespread. Psychological Science, 14(3), 262-266. http://dx.doi.org/10.1111/1467-9280.03432

- Thelwall, M., Sud, P., & Vis, F. (2012). Commenting on YouTube videos: From Guatemalan rock to el big bang. Journal of the American Society for Information Science and Technology, 63(3), 616-629. http://dx.doi.org/10.1002/asi.21679

- Warren, R. M., Gardner, D. A., Brubaker, B. S., & Bashford Jr, J. A. (1991). Melodic and nonmelodic sequences of tones: Effects of duration on perception. Music Perception, 277-289. http://dx.doi.org/10.2307/40285503

Appendix

| Track list from the initial 2011 study | Track list from the 2015 replication study |
| --- | --- |
| Adele, Someone Like You | Drake, Hotline Bling |
| Adele, Rolling in the Deep | Justin Bieber, What Do You Mean? |
| Foster the People, Pumped Up Kicks | Shawn Mendes, Stitches |
| Maroon 5, Moves Like Jagger | R. City, Locked Away |
| Lil Wayne, She Will | Elle King, Ex's and Oh's |
| Lil Wayne, Blunt Blowin' | The Weeknd, Can't Feel My Face |
| Adele, Set Fire to the Rain | Silento, Watch Me (Whip/Nae Nae) |
| Lil Wayne, How to Love | Meghan Trainor, Like I'm Gonna Lose You |
| Nicki Minaj, Super Bass | Luke Bryan, Strip It Down* |
| LMFAO, Party Rock Anthem | Charlie Puth, Marvin Gaye |
| | One Direction, Drag Me Down* |
| | Ellie Goulding, On My Mind |

*Excluded tracks